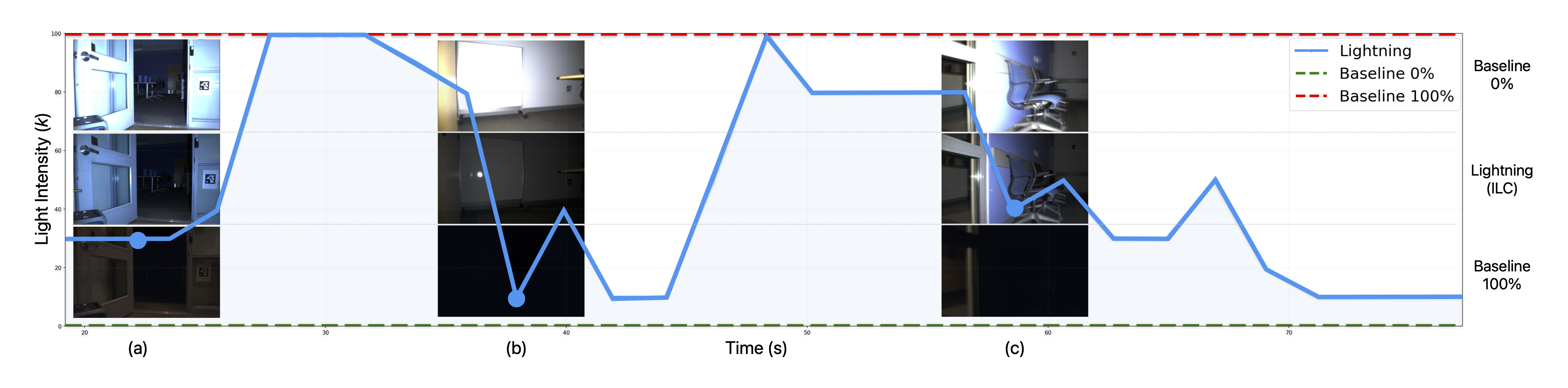

Figure 5: ILC's intensity schedule versus fixed baselines. The imitation policy, deployed on a robot, outputs a per-frame light intensity (blue), and is compared against 0% (green-dashed) and 100% (red-dashed) fixed-intensity baselines. Insets show frames at the corresponding timestamps: (a) ILC increases illumination when entering a low-light region to preserve image utility; (b) ILC reduces illumination near a reflective whiteboard to mitigate specular saturation; (c) ILC chooses an intermediate illumination level to balance competing effects of low light and specular reflection.

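We compare our method (Lightning) against fixed-intensity baselines (0% and 100%). Lightning achieves the highest trajectory completion ratio on all three sequences and the lowest Weighted RMSE (WRMSE) on kitchenloop and start2corr. On 113dark, the 100% baseline reports a lower WRMSE (0.641 vs. 1.405) but completes only 44% of the trajectory, compared with 89% for Lightning.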

| Sequence | 0% Baseline: Ratio (C) ↑ | 0% Baseline: WRMSE ↓ | Lightning (Online): Ratio (C) ↑ | Lightning (Online): WRMSE ↓ | 100% Baseline: Ratio (C) ↑ | 100% Baseline: WRMSE ↓ | Lightning Stats: Light μ (%) | Lightning Stats: Power (W) |
|---|---|---|---|---|---|---|---|---|
| 113dark | 0.26 | 2.756 | 0.89 | 1.405 | 0.44 | 0.641 | 48.28 | 19.86 |
| kitchenloop | 0.48 | 0.744 | 0.91 | 0.393 | 0.88 | 0.425 | 60.42 | 22.45 |
| start2corr | 0.95 | 0.220 | 0.99 | 0.123 | 0.98 | 0.249 | 47.33 | 19.66 |
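
As a reading aid, the per-sequence comparison can be checked mechanically. The sketch below is illustrative only: it transcribes the completion ratio and WRMSE values from the table and reports which method is best on each metric. The names `RESULTS` and `best_methods` are hypothetical and not part of the authors' evaluation code.

```python
# Values transcribed from the results table.
# Each entry maps method -> (completion ratio, WRMSE).
RESULTS = {
    "113dark": {"0%": (0.26, 2.756), "Lightning": (0.89, 1.405), "100%": (0.44, 0.641)},
    "kitchenloop": {"0%": (0.48, 0.744), "Lightning": (0.91, 0.393), "100%": (0.88, 0.425)},
    "start2corr": {"0%": (0.95, 0.220), "Lightning": (0.99, 0.123), "100%": (0.98, 0.249)},
}


def best_methods(results):
    """Return {sequence: (best method by ratio, best method by WRMSE)}.

    Higher completion ratio is better; lower WRMSE is better.
    """
    summary = {}
    for seq, methods in results.items():
        best_ratio = max(methods, key=lambda m: methods[m][0])
        best_wrmse = min(methods, key=lambda m: methods[m][1])
        summary[seq] = (best_ratio, best_wrmse)
    return summary


if __name__ == "__main__":
    for seq, (by_ratio, by_wrmse) in best_methods(RESULTS).items():
        print(f"{seq}: best ratio = {by_ratio}, best WRMSE = {by_wrmse}")
```

Reporting the two metrics side by side matters because a low WRMSE paired with a low completion ratio, as for the 100% baseline on 113dark, describes a run that covered far less of the trajectory.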